Nuclear Technologies and National Security

Nuclear Science and Engineering

The Nuclear Science and Engineering Division advances the design and operation of nuclear energy systems and applies nuclear energy-related expertise to current and emerging programs of national and international significance.

The Nuclear Science & Engineering (NSE) Division participates in key U.S. Department of Energy (DOE) nuclear energy and national security initiatives. These include leading the nation’s program for the development and demonstration of advanced reactor technologies that promise to improve the affordability of nuclear power, enhance the assurance of safety and security, and minimize the discharge of radioactive waste.

The division has five primary focus areas, many of which draw on expertise from other parts of Argonne:

- Reactor and Fuel Cycle Physics

- Advanced Reactor Design and Safety Analysis

- Sensors, Instruments, and Diagnostics

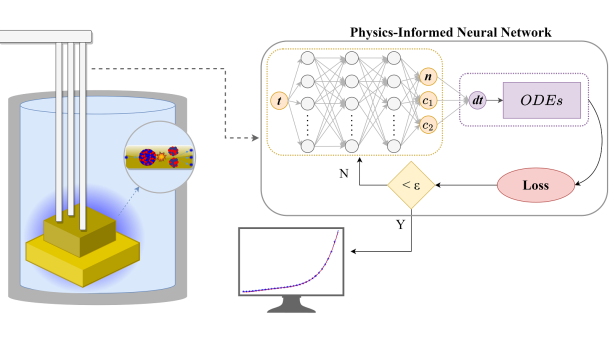

- Nuclear Engineering Modeling and Simulation

- Nuclear Materials Management and Nonproliferation