Highlights

Reprinted from “Argonne Now” - Spring 2008

Physicist Won-Sik Yang and computer scientist Andrew Siegel hold a fuel rod assembly in front of a model of the Experimental Breeder Reactor-II, a landmark reactor built in 1964 at the old Argonne-West site in Idaho and operated for 30 years.

Computer simulations help design new nuclear reactors

When it was founded in 1946, Argonne was charged with developing the technology to enable peacetime uses of nuclear energy. Now, more than 60 years later, Argonne again stands at the forefront of nuclear research as it brings its new high-performance computing facilities to bear on reactor design, enabling safer, cheaper and more efficient generation of electricity.

A fast-neutron nuclear reactor is called “fast” for a reason. The free neutrons in the reactor chamber travel at average speeds of more than 5,000 kilometers per second until they slam into uranium or other heavy nuclei, triggering the fission reaction that creates nuclear energy. But with an enormous number of neutrons zooming around and colliding with other particles, scientists have, until now, lacked the ability to tackle reactor dynamics from the ground up.

“At the fundamental level, we have a very good understanding of the neutron physics of a nuclear reactor, but we've never been able to represent all of its details at once,” said Hussein Khalil, director of Argonne's Nuclear Engineering Division. “We need to resort to some models or equations that simplify the situation, but when you do that, you introduce errors and can't be completely confident that your model represents reality.”

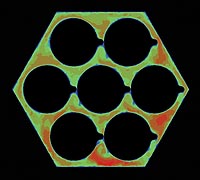

Two views of heat flow around a seven-pin fuel rod assembly. The redder colors (top) and brighter yellows (bottom) indicate regions of faster coolant flow through the assembly as it carries away the heat produced by the fuel pins in the reactor core.

Computer models of reactors help to bridge the gap between conception and operation of new nuclear facilities. By using mathematical algorithms to process information—the reactor's geometry, composition, measured physical properties and targeted operating conditions—these models can predict how different reactors will function.

Because scientists cannot forecast exactly how a reactor will respond to variations in its configuration or the conditions under which it is operated, every nuclear facility has to be designed and operated with large safety margins, which reduces the efficiency of the plant. “The traditional tools have large uncertainties,” said Andrew Siegel, an Argonne computational scientist and physicist who works at the nexus of the two disciplines. “These early models were coarse, and they represented only certain fundamental physics with a lot of assumptions and idealizations.”

These old models were developed during the second heyday of nuclear research in the late 1970s and early 1980s. Back then, the stud of the supercomputing industry was the CRAY-1, a five-and-a-half-ton behemoth that could perform 100 million calculations, or floating-point operations, per second (a rate that computer scientists abbreviate as FLOPS).

That kind of computing power far surpassed anything else available at the time, and nuclear scientists were among the first to requisition the CRAY-1's muscle for simulation work. As nuclear research fell out of favor with the government and academic institutions that rationed supercomputing resources, however, other fields of study, such as astronomy and meteorology, replaced nuclear research as the preeminent users of the available hardware. As a result, Siegel said, nuclear engineers today still have to rely on many of the design tools that resulted from work done on the CRAY-1 and machines with similar capabilities.

Although the CRAY-1's 100-megaflop capacity represented a remarkable accomplishment for the time, the nearly 30 years that have passed have seen the development of supercomputers that have made the CRAY-1 as scientifically relevant as an abacus. Last fall, Argonne became the new home of the IBM Blue Gene®/P supercomputer, which has the potential to perform at speeds of up to one petaflop (one quadrillion calculations per second), representing a ten-million-fold improvement over the CRAY-1.
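The ten-million-fold figure follows directly from the two quoted speeds. The short sketch below redoes that arithmetic; the constant and function names are illustrative, and the conversion to "CRAY-1 days" assumes, unrealistically, that a workload would scale perfectly between the two machines.

```python
# Speeds quoted in the article, in floating-point operations per second.
CRAY1_FLOPS = 100e6        # 100 megaflops
BLUE_GENE_P_FLOPS = 1e15   # one petaflop (stated peak potential)

SECONDS_PER_DAY = 86_400

# Ratio of the two peak speeds.
speedup = BLUE_GENE_P_FLOPS / CRAY1_FLOPS


def cray1_seconds_for(blue_gene_seconds: float) -> float:
    """Time a CRAY-1 would need for work that takes Blue Gene/P the given
    number of seconds, assuming perfect scaling (an idealization)."""
    return blue_gene_seconds * speedup


if __name__ == "__main__":
    print(f"speedup factor: {speedup:,.0f}")
    days = cray1_seconds_for(1.0) / SECONDS_PER_DAY
    print(f"one Blue Gene/P second is about {days:.1f} CRAY-1 days")
```

Under that idealization, one second of peak work on the newer machine corresponds to several months of CRAY-1 time.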

Argonne's newly acquired access to petascale-capable hardware, combined with three decades of accumulated scientific expertise, will revolutionize how scientists and engineers model nuclear reactors, Siegel said.

“Now, petascale computing allows us to create models that can explicitly represent a reactor's geometry,” he said. “For the first time, we can resolve a great deal of the detail of what's happening in a reactor core—it's a true paradigm shift.”

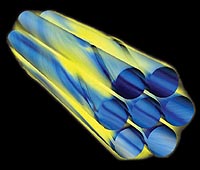

The core of a fast reactor consists of an assembly of stainless steel pins, which contain thin cylindrical rods of fuel composed of uranium or plutonium metallic alloys or oxides. Once the reactor gets going, these pins become extremely hot. In order to convert this heat to electricity and to prevent melting of the stainless steel, thousands of gallons of coolant, typically sodium, are washed over the pin bundles.

Altering the geometry of the pins even slightly, however, affects the neutron chain reaction and changes the dynamics of coolant flow and heat transfer. Because of the expense and potential safety concerns of testing different pin configurations experimentally, Siegel and his colleagues use computer simulations to model how the coolant flows around and between individual pins.

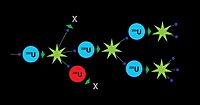

This diagram of nuclear fission shows a free neutron smashing into a uranium-235 atom, which releases atomic energy as it splits into large fission products and other free neutrons, which can then smash into other uranium-235 atoms, creating a chain reaction. Occasionally these neutrons hit non-fissile material, like uranium-238.

Reactors convert the uranium and plutonium inside each pin into energy through nuclear fission, which, when viewed at the atomic level, looks very much like a game of high-speed billiards. The “cue balls”—free neutrons—zoom around at mind-boggling speeds until they smack into uranium or other heavy nuclei. Instead of caroming off in a new direction, however, the nuclei absorb the free neutrons before splitting into a couple of lighter isotopes and more free neutrons, releasing energy. The newly freed neutrons then smash into other nuclei, continuing the chain reaction.

Millions of these collisions happen every microsecond in a fast-neutron reactor, and even with petascale-computing capabilities, nuclear scientists could not hope to represent every single one. Older models dealt with this problem not by analyzing discrete atomic events, but by treating them as continuous processes; this approach involved many assumptions and idealizations that simplified the physical realities, according to Khalil.

“What we hope to do with the more powerful computers,” he said, “is actually begin the simulation at a very fundamental scale by building the model from the atomic level where the interactions are taking place. Obviously, we can simulate this degree of detail only for a very small portion of the system, but the hope is that we can then make use of this information to create a less-detailed, yet valid, model for the entire system.”

This research is funded by the U.S. Department of Energy's Office of Nuclear Energy to promote secure, competitive and environmentally responsible nuclear technologies to serve the present and future energy needs of the nation and the world.

To carry out this vision, Argonne's scientists base their neutron physics models on one of two types of mathematical algorithms: the Monte Carlo method or the deterministic method, according to Argonne nuclear engineer Won-Sik Yang. The Monte Carlo method, in its simplest form, involves the use of random mathematical snapshots of neutrons in the reactor, which are then integrated into a larger model of the system's behavior, a process somewhat akin to stop-motion animation.
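As a rough illustration of the Monte Carlo idea, the sketch below follows individual neutrons through a one-dimensional slab of material and counts how many emerge from the far side. It is a toy model, not one of Argonne's codes: the function name `simulate_slab`, the single-energy treatment, and the cross-section values are all assumptions chosen to keep the example short.

```python
import math
import random


def simulate_slab(n_neutrons, thickness, sigma_total, absorb_prob, seed=0):
    """Estimate the fraction of neutrons that cross a 1-D slab.

    thickness   -- slab thickness (arbitrary length unit)
    sigma_total -- collision probability per unit length (1 / mean free path)
    absorb_prob -- chance that a collision absorbs the neutron;
                   otherwise it scatters into a random new direction
    """
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_neutrons):
        x, mu = 0.0, 1.0  # start at the near face, heading straight in
        while True:
            # Distance to the next collision is exponentially distributed.
            x += mu * rng.expovariate(sigma_total)
            if x >= thickness:
                transmitted += 1  # escaped through the far face
                break
            if x < 0.0:
                break  # escaped back through the near face
            if rng.random() < absorb_prob:
                break  # absorbed at this collision
            mu = rng.uniform(-1.0, 1.0)  # isotropic scatter: new direction cosine
    return transmitted / n_neutrons


if __name__ == "__main__":
    estimate = simulate_slab(20_000, thickness=2.0, sigma_total=1.0, absorb_prob=1.0)
    # For a pure absorber the exact answer is exp(-sigma_total * thickness).
    print(f"simulated: {estimate:.4f}   exact: {math.exp(-2.0):.4f}")
```

With `absorb_prob=1.0` the slab is a pure absorber, so the random estimate can be checked against the known exponential attenuation law; adding scattering removes that simple closed form, which is where sampling earns its keep.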

While the deterministic method also attempts to integrate the neutron-nuclide interactions within a reactor, it does not use random numbers and will always produce the same output from given inputs. In order to remove the complexities of measured physical properties in the energy domain, deterministic models manipulate “bins” of neutrons that are grouped by energy level. Fast-neutron energies range from 1 electronvolt (eV) to 20 million electronvolts (20 MeV). Current deterministic models partition neutrons into many energy groups, the behaviors of which are calculated in several steps with different levels of spatial detail. This technique dramatically relieves the computational load while still closely describing the true character of the fission reactions, according to Yang.
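The energy "bins" can be pictured with a small sketch that sorts neutron energies into groups. The energy range is the one quoted above; everything else is an assumption made for illustration, including the equal-width-in-logarithm spacing, the numbering of groups from the lowest energy upward, and the function names. Production codes choose group boundaries far more carefully.

```python
import bisect

E_MIN_EV = 1.0      # lower end of the range quoted in the article
E_MAX_EV = 20.0e6   # upper end (20 MeV)


def group_boundaries(n_groups):
    """Return n_groups + 1 energy boundaries, equally spaced in log(energy)."""
    ratio = (E_MAX_EV / E_MIN_EV) ** (1.0 / n_groups)
    bounds = [E_MIN_EV * ratio**g for g in range(n_groups)]
    bounds.append(E_MAX_EV)  # pin the top boundary exactly
    return bounds


def group_index(energy_ev, bounds):
    """Index of the group containing energy_ev (0 = lowest-energy group)."""
    if not bounds[0] <= energy_ev <= bounds[-1]:
        raise ValueError(f"energy {energy_ev} eV is outside the group structure")
    # bisect_right counts the boundaries <= energy; clamp so E_MAX lands in the top group.
    return min(bisect.bisect_right(bounds, energy_ev) - 1, len(bounds) - 2)


def bin_neutrons(energies_ev, n_groups):
    """Count how many of the given neutron energies fall in each group."""
    bounds = group_boundaries(n_groups)
    counts = [0] * n_groups
    for energy in energies_ev:
        counts[group_index(energy, bounds)] += 1
    return counts


if __name__ == "__main__":
    sample = [1.0, 50.0, 2.0e6, 20.0e6]
    print(bin_neutrons(sample, n_groups=4))
```

Raising `n_groups` narrows each bin, so a single set of averaged properties per group distorts the physics less, at the price of more unknowns to solve for.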

“We can accurately determine the integrated qualities of the system with the Monte Carlo method,” he said, “but it is still exceptionally difficult to get the local quantities with sufficient accuracy because of the many statistical fluctuations.”

Because the Monte Carlo method relies on a stochastic approach, the move to the petascale will only slightly improve its performance in determining small localized quantities. However, the quantum leap in computing power will enable deterministic models to process a greater number of narrower energy bins and improve spatial resolution. “The goal is to eliminate approximation in proportion to advances in computing,” Yang said.
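One way to see why raw computing power buys stochastic methods comparatively little is the standard result that the statistical error of a sampled estimate falls only as the square root of the number of samples. The sketch below applies that rule to a simple hit-or-miss tally; the function names and the example probability are hypothetical, and real tallies are more elaborate than this binomial model.

```python
import math


def relative_error(n_histories, hit_probability):
    """Relative standard error of a hit-or-miss tally.

    Each simulated neutron history scores in the region of interest with
    probability hit_probability, so the tally is binomial.
    """
    p = hit_probability
    return math.sqrt((1.0 - p) / (p * n_histories))


def histories_needed(target_rel_error, hit_probability):
    """Number of histories required to reach the target relative error."""
    p = hit_probability
    return math.ceil((1.0 - p) / (p * target_rel_error**2))


if __name__ == "__main__":
    # A small local region that only one history in a million reaches.
    p_local = 1e-6
    for n in (1e8, 1e10, 1e12):
        print(f"{n:.0e} histories -> relative error {relative_error(n, p_local):.3%}")
    print(f"histories for 1% error: {histories_needed(0.01, p_local):,}")
```

In this model, a hundredfold increase in histories trims the error by only a factor of ten, and the rarer the region, the more histories are spent elsewhere.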

With better models, scientists like Yang and Siegel hope to reduce modeling uncertainties and operate reactors just as safely while improving efficiency and driving down cost. “The new models provide answers in which we can have much more confidence,” Siegel said. “The solutions produced by the Blue Gene/P can be directly translated into dollars saved in the operation of new reactors because we no longer have to put an enormous amount of space between what you thought the answer was and how wrong you might be.”

Khalil added, “We hope that access to this technology will allow us to design higher-performance reactors and to operate them closer to their true performance capabilities.”

In addition to shrinking needlessly large design and operation safety margins, these new, more flexible models could cut the costs of constructing and operating a nuclear facility even more by allowing researchers to substitute simulation for the once-obligatory experiments they performed to validate the results that the older, highly idealized models produced. “The problem is, when you do experiments, the parameters that you use are not universal,” Siegel said. “So if you want to change any facet of your reactor design, you have to ask yourself, ‘Will my code be able to handle that, or do I have to do a new set of experiments?’ With the introduction of advanced computing, for the first time the answer is likely to be that it can.”


Last Modified: Wed, April 20, 2016 9:41 AM